Before we dive into the wonderfully chaotic world of non-deterministic behavior in LLMs, let’s recap where we left off. In Part 1 of our “Training LLMs to Do What You Want” series, we unpacked the foundational principles of autonomous prompt engineering in large language models (LLMs), focusing on how to guide a model toward deterministic behavior. We discussed how to adapt an LLM to specific tasks by refining prompts, controlling temperature and seed values, and applying structured strategies to achieve consistent, predictable outputs. This groundwork is essential for anyone looking to build reliable systems on top of LLMs. If you missed it, be sure to check out Part 1: it sets the stage for the unpredictable magic of non-deterministic behavior we’ll be exploring next.

Unpredictability in Large Language Models

Large Language Models (LLMs) are inherently unpredictable, making them both fascinating and challenging to work with. This unpredictability stems from the complex neural networks and vast amounts of data they’re trained on. When you interact with an LLM, you’re essentially engaging with a system that has learned patterns from millions of texts, but processes each prompt uniquely.

The non-deterministic nature of LLMs means that even with the same input, you might receive different outputs each time. This variability can be attributed to several factors:

- Randomness in token selection

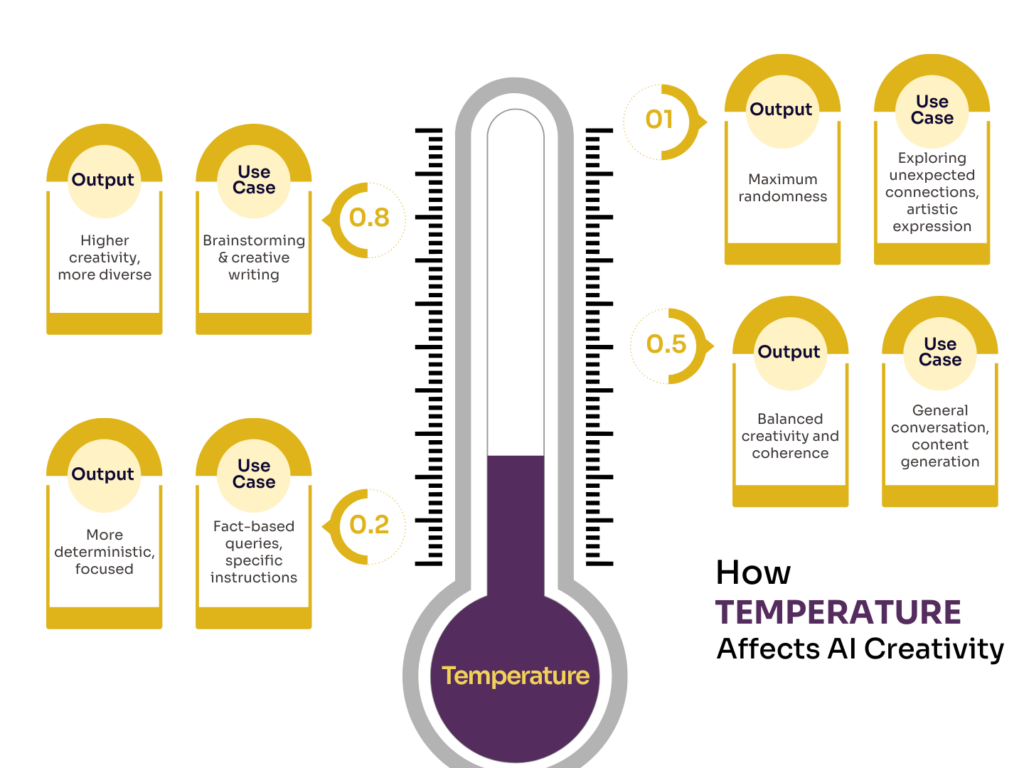

- Temperature settings

- Contextual interpretation

- Training data biases

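Each factor affects LLM behavior differently: token sampling and temperature introduce randomness at generation time, while contextual interpretation and training data biases shape which continuations the model considers likely in the first place.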

Understanding these factors is crucial when you’re working with LLMs, as they directly influence the consistency and reliability of the outputs you receive.

For example, here’s a small illustration of the impact of temperature on output variability:
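A minimal sketch of that effect, assuming a toy next-token distribution rather than a real model. The candidate tokens and their scores (`TOKENS`, `LOGITS`) are made up for illustration, and the helper names are hypothetical. The code divides the scores by the temperature, applies a softmax, and samples repeatedly so you can see how the spread of outputs changes.

```python
import math
import random
from collections import Counter


def softmax_with_temperature(logits, temperature):
    """Convert raw scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_tokens(tokens, logits, temperature, n, seed=0):
    """Draw n tokens from the temperature-scaled distribution and count them."""
    rng = random.Random(seed)  # fixed seed so the demo itself is repeatable
    probs = softmax_with_temperature(logits, temperature)
    return Counter(rng.choices(tokens, weights=probs, k=n))


# Hypothetical next-token candidates and scores, invented for this example.
TOKENS = ["blue", "clear", "overcast", "falling"]
LOGITS = [4.0, 3.0, 2.0, 0.5]

if __name__ == "__main__":
    for t in (0.2, 1.0, 2.0):
        counts = sample_tokens(TOKENS, LOGITS, t, n=1000)
        print(f"temperature={t}: {dict(counts)}")
```

At a low temperature almost all of the probability mass lands on the top-scoring token, so repeated runs look nearly identical. At a higher temperature the distribution flattens and less likely tokens appear far more often.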

Benefits of diverse and creative outputs

The non-deterministic behavior of LLMs isn’t always a drawback. While it is challenging in some respects, it offers significant advantages that can enhance your AI-driven applications and workflows. Here are some key benefits you can leverage:

Enhanced creativity and ideation

One of the most significant advantages of non-deterministic behavior in LLMs is their ability to generate novel and creative ideas. By producing diverse outputs for similar inputs, these models can serve as powerful brainstorming tools. You can use them to:

- Generate multiple unique storylines for creative writing projects

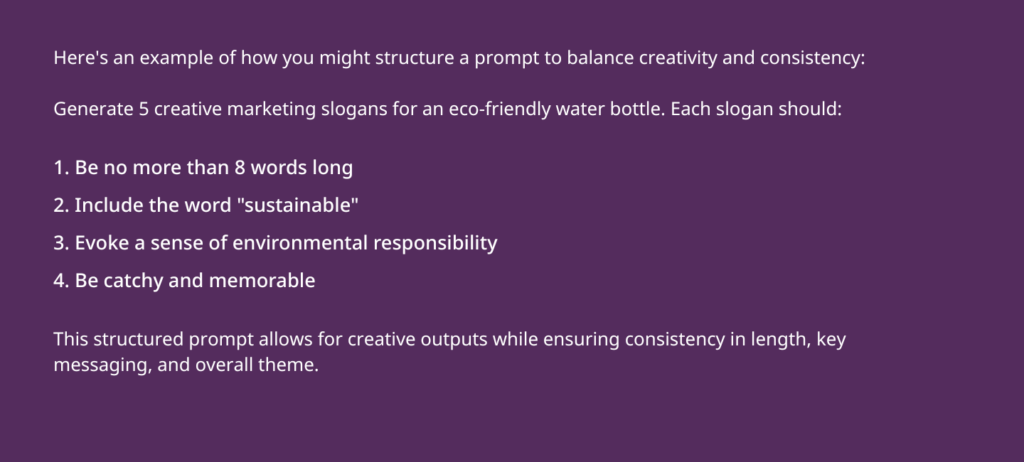

- Develop innovative product concepts or marketing slogans

- Explore various solutions to complex problems in fields like engineering or design

Improved natural language interactions

The ability to generate diverse responses makes LLMs more adept at engaging in natural, human-like conversations. This diversity helps in:

- Creating more engaging chatbots that don’t sound repetitive

- Developing virtual assistants that can adapt their communication style

- Simulating realistic dialogue for language learning applications

Personalization and adaptability

Non-deterministic behavior allows LLMs to tailor their outputs to different users or contexts more effectively. This adaptability is beneficial for:

- Customizing content recommendations based on user preferences

- Generating personalized learning materials in educational applications

- Adapting the tone and style of written content for different audiences

Robustness against adversarial attacks

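The unpredictability in LLM outputs can make them more resistant to certain types of adversarial attacks. Because the model does not always produce the same response to a given input, an attack that works once may not work reliably, which makes the model harder to manipulate or exploit systematically. Randomness alone is not a safeguard, though, so treat it as a complement to dedicated defenses rather than a replacement.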

Exploration of alternative perspectives

When tackling complex issues or analyzing scenarios, the diverse outputs of LLMs can help you explore multiple viewpoints. This is particularly useful for:

- Conducting thought experiments in fields like philosophy or ethics

- Analyzing potential outcomes in strategic planning or risk assessment

- Generating diverse hypotheses in scientific research

Enhanced problem-solving capabilities

By generating multiple approaches to a problem, non-deterministic LLMs can help you discover innovative solutions that might not be immediately apparent. This can be invaluable in fields such as:

- Software development, where multiple algorithmic approaches can be explored

- Business strategy, where various market entry or growth strategies can be considered

- Scientific research, where diverse hypotheses can be generated for testing

Serendipitous discoveries

The unexpected connections and ideas generated by LLMs can lead to serendipitous discoveries. This can be particularly beneficial in:

- Scientific research, where novel connections between disparate fields can be uncovered

- Art and literature, where unique combinations of ideas can inspire new works

- Innovation and entrepreneurship, where unconventional ideas can lead to breakthrough products or services

Risks associated with non-deterministic responses

While the unpredictability of LLMs can be advantageous, it also comes with its share of risks that you need to be aware of:

- Inconsistency in outputs: The same prompt might yield different results each time, which can be problematic in applications requiring consistent responses.

- Potential for misinformation: The model might generate plausible-sounding but incorrect information, especially when pushed beyond its training boundaries.

- Bias amplification: Existing biases in the training data can be randomly emphasized in non-deterministic outputs, leading to unfair or discriminatory responses.

- Lack of controllability: It can be challenging to guide the model towards specific desired outputs consistently.

- Reduced reliability in critical applications: In fields like healthcare or finance, where accuracy is crucial, non-deterministic behavior can be a significant liability.

To mitigate these risks, you need to implement robust testing and validation processes. This might include:

- Running multiple iterations of the same prompt to identify inconsistencies

- Cross-referencing LLM outputs with reliable sources

- Implementing human oversight for critical applications

- Using bias detection and mitigation techniques
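The first mitigation, running the same prompt several times, can be sketched as a small consistency check. This is an illustration under assumptions, not a production test harness: `generate` is a placeholder for whatever function calls your model, and `make_fake_model` is a hypothetical stand-in that returns canned answers so the example runs without any API.

```python
import random
from collections import Counter
from typing import Callable


def consistency_report(generate: Callable[[str], str], prompt: str, runs: int = 10) -> dict:
    """Call generate(prompt) several times and summarize how often outputs agree."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    # Normalize lightly so trivial whitespace/case differences are not counted.
    outputs = [generate(prompt).strip().lower() for _ in range(runs)]
    counts = Counter(outputs)
    top_answer, top_count = counts.most_common(1)[0]
    return {
        "distinct_outputs": len(counts),
        "most_common": top_answer,
        "agreement_rate": top_count / runs,
    }


def make_fake_model(seed: int = 0) -> Callable[[str], str]:
    """Hypothetical stand-in for a real LLM call: picks a canned answer at random."""
    rng = random.Random(seed)
    answers = ["Paris", "Paris", "Paris", "paris ", "Lyon"]
    return lambda prompt: rng.choice(answers)


if __name__ == "__main__":
    report = consistency_report(make_fake_model(), "What is the capital of France?", runs=20)
    print(report)
    if report["agreement_rate"] < 0.9:  # threshold chosen arbitrarily for the demo
        print("Low agreement: consider routing this prompt to human review.")
```

Exact-match comparison only suits short, factual answers. For longer free-form text you would likely need a looser similarity measure, which is outside the scope of this sketch.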

Balancing creativity and consistency

Finding the right balance between the creative potential of non-deterministic behavior and the need for consistency is a key challenge in working with LLMs. Here are some strategies you can employ to strike this balance:

- Adjust temperature settings: By adjusting the temperature parameter, you can control the level of randomness in the model’s outputs. Lower temperatures lead to more predictable responses, while higher temperatures encourage more varied, creative ones.

- Use structured prompts: Provide clear, detailed prompts to guide the model towards more consistent outputs while still allowing for some creativity.

- Implement post-processing: Apply filters or rules to the LLM’s outputs to ensure they meet specific criteria or fall within acceptable ranges.

- Combine deterministic and non-deterministic approaches: Use deterministic methods for parts of your application that require consistency, and leverage non-deterministic behavior for creative tasks.

- Iterative prompting: Start with a broad prompt and gradually refine it based on the LLM’s responses to achieve a balance between creativity and specificity.
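Two of the strategies above, post-processing and combining deterministic with non-deterministic approaches, fit together naturally: let the model generate freely, check each candidate against fixed rules, and fall back to a deterministic answer if nothing passes. The sketch below assumes a placeholder `generate` function for the model call, and the rules themselves (`MAX_WORDS`, `BANNED_PHRASES`) are hypothetical examples rather than recommendations.

```python
from typing import Callable

MAX_WORDS = 30  # hypothetical length limit for this example
BANNED_PHRASES = ("guaranteed", "risk-free")  # hypothetical content rules


def passes_rules(text: str) -> bool:
    """Return True if the text is non-empty, short enough, and free of banned phrases."""
    if not text.strip():
        return False
    if len(text.split()) > MAX_WORDS:
        return False
    lowered = text.lower()
    return not any(phrase in lowered for phrase in BANNED_PHRASES)


def generate_with_checks(
    generate: Callable[[str], str],
    prompt: str,
    fallback: str,
    max_attempts: int = 3,
) -> str:
    """Try the model up to max_attempts times; return the fixed fallback if all fail."""
    for _ in range(max_attempts):
        candidate = generate(prompt)
        if passes_rules(candidate):
            return candidate
    return fallback


if __name__ == "__main__":
    # A fake model that fails the rules once, then succeeds.
    replies = iter(["This plan is guaranteed to work.", "Here is a short, cautious summary."])
    print(generate_with_checks(lambda p: next(replies), "Summarize the plan.", "Please contact support."))
```

The retry loop relies on the model being non-deterministic, since a second attempt can produce a different candidate, while the fallback keeps the worst case predictable.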

To illustrate how these strategies can be applied across different use cases, consider the following table:

| Use Case | Creativity Need | Consistency Need | Balancing Strategy |
|---|---|---|---|
| Creative Writing Assistant | High | Medium | High temperature, human curation |
| Customer Service Chatbot | Medium | High | Ensemble method, strict prompt engineering |
| Educational Content Generator | Medium | High | Domain-specific fine-tuning, fact-checking |
| Product Description Generator | High | Medium | Adaptive system with user feedback |
| Legal Document Analyzer | Low | Very High | Low temperature, extensive human oversight |

As we’ve unraveled the unpredictable nature of non-deterministic behavior in large language models (LLMs), it’s clear that this chaos isn’t a bug—it’s a feature. Whether you’re chasing creativity, simulating human-like conversation, or exploring new frontiers in autonomous prompt engineering in LLMs, mastering this variability is key. Now that you understand both the promise and the pitfalls, you’re not just training LLMs—you’re shaping intelligent systems to think beyond the script. The question is: are you ready to embrace the unpredictability and make it work for you?